Pinto beans and cornbread

Note: Mita Williams writes an excellent weekly newsletter–University of Winds–that she always ends with a question to her readers. This week’s was a request to send our favourite bean recipe as her family acquired an InstantPot over the holidays. I don’t have much of a recipe to offer, but I periodically make a particular meal and the story of why I eat this food occurs to me every time I do. I’ve thought about it more in recent years and it’s probably a good thing for me to write it down. I haven’t written much of anything on this blog in ages other than conference notes.

Ugh, spare me the narrative and skip to the recipe already.

When I was in grade five, my family moved to Grand Junction, a small city in Western Colorado about four or five hours from Denver and Salt Lake in either direction. My father, an engineer-turned-minister (who ultimately went back to engineering; that’s a story), had taken a job as the dean of a small evangelical college there after starting a church in central Ohio and, I suspect, burning out a bit on that task. Grand Junction has changed a great deal in the intervening decades, but then as now there are a fair number of people there living in very modest ways.

In the church we attended there, we met a man named John Ball who was an esteemed and influential elder in that congregation. John was older, perhaps retired, maybe around 70. About a year after we moved there, he and his wife invited us over for dinner. Given his role and authority in the church, I probably expected them to have a house larger and nicer than ours. To my perhaps eleven- or twelve-year-old self, their house was cozy, quaint, and remarkably tidy; as I’ve reflected on this experience over the years, I realize it was very small and modest. What really made an impression on me was the meal they served us: pinto beans and cornbread. As a preacher’s son, I already had experienced scores of dinner invitations and had figured out that people (we did it, too) really put on a show when guests come over. Better cuts of meat, fancy desserts, etc. Pinto beans and cornbread deviated from that pattern. Then I ate it, and fell in love. I seem to recall that John had some funny (and I realize now, defensively self-deprecating) name for the beans, perhaps Spanish strawberries. I didn’t care what it was called, but it was delicious.

I didn’t eat this again for many years, but eventually it became a mainstay for me and I introduced my family to it and they enjoy it as well. I don’t know if we eat anything else that provides so much comfort, sustenance, and nutrition for so little money, but it was reflecting on that last point a few years ago that made me think back to John Ball. On a lark, I just googled him and was surprised to discover he co-founded the college that hired my father. Huh.

Instant Pot Pinto Beans

Long story short, here’s the recipe. For the cornbread, I typically use the recipe in the original Moosewood Cookbook although I rarely make it exactly as described (e.g., always oil, not butter, because it’s easier). The Internet does not need another cornbread recipe.

Quantities as you wish. I challenge everyone to make this without regard for measures or writing anything down. It cannot come out badly.

Sort, wash, and soak some pinto beans. Overnight is best, but morning to evening is fine.

Use the sauté setting to sweat an onion, some garlic, and perhaps a red pepper (nothing green goes near this in our house, which is the corollary to the commandment that nothing red goes in guacamole) in a bit of oil or lard if you dig swine and want richness. Once tender, toss in ground red pepper or pepper flakes to suit your needs, some cumin (not too much; this isn’t chili), maybe a bit of ground coriander, some paprika, and a bay leaf or two. While the onions and garlic are sweating, drain and wash the beans, dumping the wash water, then add them to the IP. Since they’re soaked, adding water until they’re just covered is fine but add more if soupier is desired. Cook at high pressure for 20-25 minutes depending on how long they’ve soaked and how long they’ve been in the pantry. Oh, if you have any dried chile pods (ancho, chipotle, poblano, etc.) lying around, seed them and toss them in, removing them when done. This is a trick I started doing with the IP and, oh my, good call. I always buy them in those huge bags because I like the look and idea of them, but this is how I actually make progress on them. Salt and pepper to taste, as with anything.

If too soupy when done, either dump some of the water (travesty!) or just take a masher and squish up some of the beans to thicken it. Eat with cornbread and toss in some sour cream, Valentina, plain yogurt, or whatever. Make this your dish.

CNI 2019 Spring Membership Meeting

Many thanks to CNI’s staff and leadership for a wonderful and engaging event. At this iteration of CNI, I took part in their series of Executive Roundtables, this one exploring how institutions are moving services and infrastructure to the cloud. At a Canadian institution, this is of particular interest given that most major cloud vendors are U.S.-based. I look forward to the summary report that CNI will issue based on all of our input on the topic.

Of the talks below, I would highlight Herbert van de Sompel and Martin Klein’s talk on archiving the bits of intellectual property that researchers sprinkle around the Web in the course of their work. I’ve already raised it several times in the last week in conversations with faculty and administrators here and it finds immediate resonance.

A Research Agenda for Historical and Multilingual OCR

Ryan Cordell – Northeastern U

OCR quality was diminishing the efficacy of text mining research. Led to a report on the state of OCR with an eye toward steps that could be taken to make it work better.

Cordell noted that we tend not to think about OCR when it’s working or when we perceive it to be working well enough. Showed an example from a Vermont newspaper illustrating just how poor OCR can be. Mentioned the Trove example in Australia, which offers users the ability to transcribe newspapers. Great work, but does not scale, at all. Read more…

CNI 2018 Fall Membership Meeting

CNI was information dense as always. Below are the notes I recorded. Mistakes and leaps in logic are mine, not the speakers’.

It has been clear for a number of years that CNI is growing in popularity; at the opening session Cliff Lynch noted about a half dozen new members who have joined just since the spring membership meeting. For many of us who have been CNI regulars for years, this is not surprising. Cliff, Joan, and the fantastic CNI staff organize stellar membership meetings and in general run a very solid and communicative organization.

Monday

From Talking to Action: Fostering Deep Collaboration Between University Libraries, Museums, and IT

Susan Gibbons, Dale Hendrickson, Louis King, Michael Appleby – Yale U

Gibbons stressed that if these large and established entities can collaborate, it can be done at other places. Her role expanded in 2016 to include the title of Deputy Provost for Collections. Read more…

Access 2018 in Hamilton

As always, Access was a delight to attend. I am so grateful to my former colleagues and still friends at McMaster, who carried on with the planning after I put my hand up at last year’s wonderful event in Saskatoon and volunteered to bring it to Hamilton, only to subsequently depart for the University of Alberta. I had the impression this year that both the audience and the list of speakers showed the result of years of steady progress toward diversifying Access both topically and in terms of gender. This impression was confirmed when I went back and looked at my notes from previous Access conferences, where the preponderance of men isn’t difficult to spot. Not suggesting at all that there isn’t more work to do, as our keynote speakers so eloquently and forcefully underscored for us.

Onward to the notes I took. As always, all errors are mine, all brilliance theirs. Read more…

A Primer on Library Titles

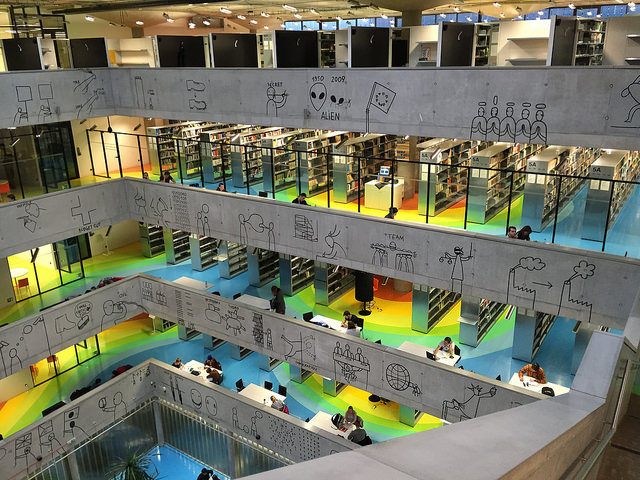

National Technical Library, Prague

For those of us working in academic libraries, the myriad roles in our organizations are generally fairly clear, but for the rest of our institution it seems that our internal structures and designations remain unclear. What follows is an attempt to provide some clarity about the roles in libraries. It might help to offer some caveats to keep things on track here. First, there are surely exceptions to nearly everything I will write below. I acknowledge that, but rather than dwell on exceptions the goal here is to provide a generally applicable overview. The other caveat is that libraries and the library profession are undergoing some fairly significant structural changes. I’ll try to capture some of this shifting without overly muddying the waters.

Perhaps the easiest place to start is at the top of the organization. Academic libraries are somewhat akin to faculties or colleges in structure, so not surprisingly the role at the top is often known as dean of libraries. Depending on the institution, however, other titles may be university librarian (very common at large libraries), chief librarian, or director (less common in larger research libraries but fairly common at non-doctoral institutions). Perhaps adding to the confusion is that at many institutions these roles may have two titles, e.g., vice provost and university librarian. No matter the title, this person occupies the top spot on the organizational chart and generally reports directly to a vice president of the university, most commonly the VP academic / provost, although at some institutions the university librarian may report to the VP research or another VP portfolio. What this means is that they sit at the same level in the university hierarchy as the deans of faculties and colleges, generally serving on dean’s council or similar executive bodies.

It is important to stress here that there is one and only one university librarian at nearly all institutions. I’ve heard of examples of academic libraries that call all of their permanent librarians university librarians, but this is exceedingly rare. In other words, when one sees the title university librarian, it is correct to assume that one is dealing with someone parallel to a dean. Inside the library world, we generally call someone with the university librarian title a UL.

Academic libraries at universities, i.e., those with more than 30 or so employees (so not most U.S. liberal arts colleges, for example) will generally have a senior leadership team that consists of the university librarian/dean/director and one or more associate university librarians/deans/directors. These individuals are colloquially known as AULs or ADs and their number roughly corresponds to the size of the library and its staff cohort. My employer, a major research library yet relatively small by the standards of the Association of Research Libraries, an organization representing the 120 or so largest research and academic libraries in North America, has three AULs. Other institutions may have as few as one AUL or AD while larger libraries may have five or even more. These AULs or ADs most commonly have non-overlapping portfolios, i.e., various departments or individuals report directly to an AUL, although projects and tasks often cross the portfolio boundaries. Typical titles one might hear would be AUL for Public Services or AD for Research Engagement and Scholarly Communication; what comes after AUL or AD is an attempt to describe the scope of responsibility more or less clearly. As these individuals report to a UL or dean/director, they are most closely analogous to an associate dean.

The UL/dean/director and AULs/ADs together form the core of an academic library’s leadership or executive team. Some libraries include other individuals in this group, typically those that have a direct reporting line to the UL/dean/director, such as directors of finance, human resources, and/or advancement. Currently, I would estimate that well over 90% of ULs/deans/directors and their associate-level colleagues are professional librarians, in other words they have the terminal degree for the profession, commonly referred to as the MLS or MLIS (master of library and information science) although it often goes by a different name these days depending on the granting institution.

This is a good spot to clarify a very simple yet often misunderstood point about libraries. A common faux pas among members of the campus community–I’ve heard this from the youngest undergraduates and the most experienced faculty and administrators in my career–is to refer to anyone who works in a library as a librarian. I point this out not to establish a classist hierarchy–I consequently reject any such stratification–but to make clear that librarian is a professional designation based on a specific education. To attempt to make this clearer, I would point out that doctors are not nurses nor vice versa. Both are professions in their own right, with their own unique educational standards and roles in health care.

I would be negligent not to point out that there are individuals working in academic libraries with the librarian title who lack the aforementioned library degree. This is not a new phenomenon but rather goes back many decades. Individuals in such roles most often have a PhD and found their way into libraries based on specific subject expertise from their PhD program. Some of my librarian colleagues at Yale fell into this category. Since the founding of the Council on Library and Information Resources’ (CLIR) postdoctoral fellow program in the mid-aughts, we have witnessed an uptick in the number of PhD holders without an MLS assuming librarian roles, a few even at the UL/AUL level. This has led to some pushback although that seems to have calmed somewhat in recent years. Many libraries, including my current employer, still require that any role with the title librarian be filled by someone with an MLS.

The percentage of a library’s staff that holds the title and rank of librarian varies widely from institution to institution. I would suggest that the librarian cohort typically forms 20-40% of the total staff. At many institutions, librarians hold faculty status and pass through an up-and-out tenure system similar to that for faculty. At others, librarians may have ranks and fairly high job security with permanent (open-ended) positions, but lack faculty status. I’ve worked at two institutions with faculty status (and earned tenure) and at three without and could probably write a novel-length treatise on the pros and cons of either situation. It’s a touchy topic for many librarians so I will delicately skirt it here.

Beyond librarians, things become somewhat less predictable in terms of nomenclature, but one can see fairly common staffing trends. Most libraries have a number of individuals in managerial or professional roles that do not require an MLS but rather require other professional qualifications. The aforementioned human resources and financial roles would be two examples, as would a number of IT management positions in many libraries. Similarly, a role requiring a high degree of proficiency in a specific skill, such as GIS or data analysis, may well fall into this category. This group is most often smaller than the librarian cohort but often fills similar managerial roles.

Numerically speaking, the largest cohort in most academic libraries will be what we often refer to somewhat loosely as library staff, which can be quite confusing for those outside of libraries since everyone who works in the library is by a broader definition part of the staff. These roles go by various designations, including library assistant, library technical assistant, or library technician. In Canada there are often college-level programs that offer certificates that qualify one for such a role; in my experience this is not the case in the United States (where I started in libraries as a library technical assistant). When one walks up to a service point of any type in a modern academic library, it is most likely that one will first encounter a staff member in this category, as they often fill what we refer to as front-line service positions. Their work is also critical in areas such as interlibrary loan, collection management, IT support, acquisitions, archival processing, and many others.

The synergistic contributions of all of the people working in libraries are what make them successful as organizations. Having various types of employees with a wide range of credentials and training enables us both to remain solidly grounded in our traditional obligations as well as to branch out into new areas. It is also worth noting that many people working currently as librarians previously held roles of other types in libraries; similarly it is not uncommon to find individuals with an MLS working in non-librarian roles due to the general paucity of librarian roles and the number of MLS graduates.

Hopefully this relatively brief excursus helps our university colleagues beyond the library understand how we staff libraries and what our various titles and designations signify. Please feel free to ask questions and/or to offer feedback. I’d like to treat this post as a living document that evolves as libraries change.

CNI Fall 2017 Membership Meeting notes

CNI was informative and enriching as always. I had the opportunity this time around to participate in the executive roundtable on moving data to the cloud and enjoyed that environment as always.

At this event, I chose to take most of my notes using a pen and paper, after reading some thoughts on this from Mita Williams and viewing a video on the topic she shared. I found that I took more copious notes when using the pen yet could follow talks much more closely. Also, with my laptop closed, I wasn’t tempted to check email or look up various bits related to the talk. It was kind of refreshing and this might become a new habit.

Archival Collections, Open-Linked Data, and Multi-modal Storytelling

Andrew White – Rensselaer Polytechnic

Have multiple digital archive platforms running in parallel, Digitool, Archon, Inmagic Genie, all end-of-life systems. Right now, they scrape data out of these various tools, augment it, and present information, e.g., on historical buildings, via static HTML pages. Read more…

@Risk North in Ottawa

cc Asif A. Ali, flickr

@Risk North was a further meeting of an initiative that the Center for Research Libraries kicked off a while back to address issues related to print preservation. I attended after reading the program and realizing that we can no longer talk about print preservation without including digital preservation and the myriad points of intersection in these two critical responsibilities of libraries.

Approaching the Long-Term Preservation of Print Documentation: A Current Overview of International Models, Challenges and Opportunities

Constance Malpas, OCLC

Walked through an analysis of print book collection density. A vast percentage of books reside in economic mega-regions. In Canada, there are five centres outside of those regions that have significant collections: Winnipeg, Edmonton, Calgary, St. John’s, and Halifax.

Noted that there is a great deal of overlap between institutions, but also a lack of overlap, i.e. many titles that are only held in a small number of institutions. The more duplication you have, though, the easier it is to motivate libraries to participate in collaborative ventures because it facilitates space reclamation.

Scarcity is common in research collections; while scaled collaboration addresses that, it doesn’t always scale linearly because there is so much overlap between collections. Academic institutions are the de facto custodians of the scholarly record, i.e. this is where the books reside. Elements that drive this are shared bibliographic infrastructure, as these “reduce noise” in collections data. Trust relationships between institutions, including those that include mutual borrowing, are also an important element of making collaboration work.

Spoke a bit about European models, which as anyone familiar with that context would attest are far beyond what we have accomplished in North America. Many examples from many countries where national solutions have come to the fore.

She spoke about drivers of this work. One is shared bibliographic standards and platforms. Another facet is specializing further, i.e. “collecting more of less.” In other words, collaborative collection development as it has been long discussed and strategized. The third element was “explicit commitments,” or moving commitments beyond the institutional level. The last is reciprocal access: “create locally, share globally.”

@Risk and National Coordinated Efforts in Print Preservation in the United States

Bernard Reilly, Center for Research Libraries

Noted briefly the difference between a Memorandum of Understanding and a contract when it comes to shared print initiatives. He noted that some of these have a 25-year commitment time frame, and he asks which director can say that in 25 years their space will remain the same.

Showed graphics indicating how much of the serial record in the social sciences and humanities has been preserved. “It’s a modest start” as he noted.

Quoted Hazen who said our future is digital.

Speaking of shared collections, he quipped that if the shark isn’t swimming, it dies. These cannot be static collections but must be developed and maintained. Also noted that preservation and electronic access must merge; without digital delivery this doesn’t work going forward. We also need consensus on shared print storage. We need to develop an effective narrative to communicate what we are doing to scholars and funders.

National Heritage Collections: Perspectives on Mandated Collecting

Maureen Clapperton, Bibliothèque et Archives nationales du Québec

Quebec’s national library collects in their Laurentiana collection publications about Quebec from the rest of the world, as well as other materials related to Quebec. They have a legal deposit requirement, but also receive materials through voluntary deposit and donations as well as purchase materials.

They accept everything received via legal deposit, and these materials never leave the collection.

Monica Fuijkschot, Library and Archives Canada

LAC commits to holding last copies of Canadiana. She outlined their other principles, which relate to description, storage, lending, perpetuity, and transfer (of deaccessioned materials to other institutions). They will lend their materials, but only in instances when they are the only possible source.

The Last Copies Initiative arose in the broader library community. The National Union Catalogue they maintain currently has 30 million records in it and is migrating to an OCLC platform over the next two years, with release this January of the public catalogue.

Current Canadian Initiatives in Collective Print Preservation

Scott Gillies, TUG Libraries; Doug Brigham, COPPUL; Caitlin Tillman & Steve Marks, Keep@Downsview; Alan Darnell, Scholars Portal

Gillies and Brigham described their existing shared print activities in TUG and COPPUL, respectively. TUG is of course well established, while COPPUL SPAN (Shared Print Archive Network) started in 2012. SPAN’s first focus was low-risk print journals, i.e. digital equivalents with post-cancellation access rights. In their second phase, they began to focus on other journals, including some Canadian journals, as well as some without post-cancellation access rights. In the third phase, they looked at earlier and later titles from their second phase, some of which were at high risk (379 titles). Now, in their fourth phase, they are looking at serials and monographs from Statistics Canada, ongoing. In each phase, archive holders agree to hold the title until a specified date.

Keep@Downsview started as a conversation between the provosts at Queen’s and U of Toronto. Keep expands the scope of shared collections by adding humanities and social science content, since other projects often emphasize STEM serials. Lesson learned: matching metadata between institutions is really hard. How to remedy that: share metadata better between institutions. Marks noted that by linking print and digital preservation practices we help mitigate myriad risks and we also open up the doors to other opportunities: better discovery, better accessibility, other scholarly uses, etc. Not least, these benefits might get people enthused about putting materials into shared preservation facilities.

Darnell argued initially that format silos (print vs. electronic) have created preservation silos, i.e. separate activities.

Access 2017 Saskatoon

Lovely view from the conference room

After a long hiatus while on research leave, I’m back out on the road getting in touch with the profession and taking notes. Access is both a favourite and an excellent reentry path given its warm and friendly environment. Within about an hour of being at Access, I had already worked up two proposals to take back to my organization to take up some new work, so my confidence in its ability to refresh and invigorate my thinking was rewarded.

One small note about these jottings: some of these papers had co-authors or co-creators who were not at Access. When I take notes at conferences, I drop any collaborators not actually speaking, not to slight them, but because part of the reason I keep these notes is to help me connect names to faces and names to specific projects. Having a visual memory is a great way for me not to lose track of all the threads in my head.

The Trouble with Access

Kim Christen – Washington State U

The part of this talk that made a strong impression on me was when she walked through items in the Plateau Peoples’ Web Portal and showed the differences between standard museum or archival description and rich description provided via community curation. Read more…

Digital Humanities 2016 Kraków

One interesting quirk of the DH conference is that so many talks are presented with a long list of collaborators in the program. It’s great to see collaborative work becoming more the norm. This isn’t new, but I seem to note an increase in this year over year. I’ve chosen just to list the person(s) who presented to keep my notes shorter and easier to read.

Tuesday, July 12

Workshop – CWRC & Voyant Tools: Text Repository Meets Text Analysis

Susan Brown, U of Guelph; Stéfan Sinclair, McGill U; Geoffrey Rockwell, U of Alberta

Susan sketched a history of CWRC, which has its origins in the Orlando project (which dates from pre-XML days). I had no idea that CWRC used Islandora, but was happy to hear it. They’ve done custom module development for Islandora. Noted that a point of the workshop was to demonstrate how tools can be used in tandem, and are not always silos that don’t interoperate.

Unfortunately, the main server that powers CWRC went pear-shaped shortly before the conference, so it proved to be difficult to navigate and edit. Still, got a good overview of CWRC’s editing tool and its capabilities. Very keen to try it further when it’s released this fall in a hopefully stable version.

For Voyant, we worked from this tutorial. Generally speaking, I was too busy tinkering and learning to take notes. Interesting to note was that, aside from the CWRC server’s crankiness, Voyant also bogged down at times when we were all hammering on it (~35 people in the room). Given Voyant’s success and wide use, hopefully there will be some scaling so that it performs well under heavy loads. Read more…

Full circle

I generally call this my ‘personal’ professional blog, but this post is purely personal. Our professional demeanor can be a bit of a mask of inscrutability. Sometimes it’s good to let it slip.

Back in fall 1992, I was staying with a good friend in Berlin. The summer previous I had worked as a cook at Sperry Chalet in the backcountry of Glacier National Park. Although back then I worked mainly to make money to travel, this particular trip only happened because I was going through a difficult and painful breakup–ah, young love–with my Czech girlfriend. We’d had it out in Prague within days of my arrival. Alas, to make the flight worthwhile, I had booked the return flight six weeks later. Heartsick and aimless, I went to Berlin since it’s a bit of a third or fourth home. Read more…